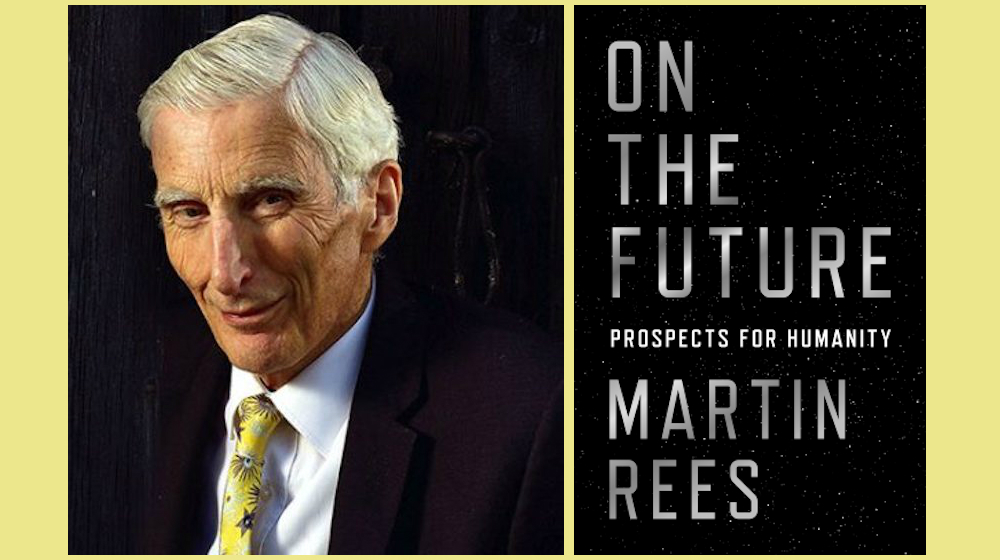

How might billion-year cosmological horizons get shaped by decisions humans make in the next few decades? How might future-oriented scientific engagements best address present-day social disparities? When I want to ask such questions, I pose them to Lord Martin Rees. This present conversation focuses on Rees’s book On the Future: Prospects for Humanity. Rees, a cosmologist and space scientist, is based in Cambridge, where he has been a Research Professor, Director of the Institute of Astronomy, and Master of Trinity College. He was President of the Royal Society (the UK and Commonwealth’s academy of science) from 2005 to 2010. In 2005, he was appointed to the UK’s House of Lords. He belongs to numerous foreign academies, and has received many international awards for his research. He writes and lectures extensively for general audiences, and is the author of nine books, including: Before the Beginning, Just Six Numbers, Our Final Century, Gravity’s Fatal Attraction, and Our Cosmic Habitat.

¤

ANDY FITCH: I find particularly useful On the Future’s attempts to articulate the most constructive balance of “accelerating some technologies but responsibly restraining others.” So in terms of the book’s Prospects for Humanity subtitle, could we take, as one example of an emergent technological capacity combining both promising and ominous prospects for humanity, possibilities for targeted gene-editing to combat specific diseases, but also for more comprehensive genetic recoding to produce “designer babies”? And could we start to talk through the types of public discussions we do have, and the types of public discussions we should have, in advance of technological developments forcing our hand and reshaping human society without adequate reflective deliberation? How might such deliberation best help to reduce risks that scientific advancements can bring, without “putting the brakes on technology”? How might such deliberation in fact enhance our prospects for directing technological innovation all the more urgently?

MARTIN REES: Bio-tech will be a hugely vibrant field in the coming decades. But already it has raised problems of both ethics and prudence in how we apply this technology, and how we regulate it. Already we have the ability not only to sequence the human genome, but to start editing and synthesizing genomes. This has led to important ethical questioning. Recently a Chinese scientist modified a human genome to make babies less vulnerable to HIV. Critics roundly attacked this project, complaining that the benefits didn’t counteract the risks. These critics considered that experiment quite different from earlier cases, where scientists had edited out a single gene making babies vulnerable to a particularly damaging condition like Huntington’s disease. So already we have seen cases where genetic editing seems appropriate, and where it does not.

Going back 10 or 20 years, we had debates over genetically modified crops, with different outcomes in different countries. In the US you stayed fairly relaxed about this, whereas in Europe a much greater reluctance to allow GMOs emerged. In Europe the precautionary principle (the idea that one should do nothing unless one can ensure no serious downsides will occur) carried far greater weight. There was a standoff between environmental campaigners on the one hand, and Monsanto (an aggressive bio-chemical company) on the other. That led to a polarization of public opinion before any consensus could be built. And today Europe still has a strict regime opposing GMOs, even though you in the US have performed a huge controlled experiment on over 300 million people for a decade or two, with no manifest great harm emerging. So on that particular case I believe Europe has been overcautious.

We also have seen bio-ethics debates around the treatment of human embryos. A celebrated 1984 report by the philosopher Mary Warnock guided the UK towards a broadly acceptable system for regulating experiments that use human embryos. We had a good dialogue between parliamentarians and the public and medical experts, leading to a consensus to allow experiments on embryos up to 14 days old, but not beyond. That compromise still stands. And obviously it doesn’t satisfy all Catholics, but I still think we did a fairly good job.

Geneticists are now acquiring much greater insight not only into single-gene human characteristics, but into characteristics determined by many, many genes. By analyzing many thousands of human genomes, we will learn which combinations lead to intelligence and other desirable traits. It also will become possible to synthesize a genome that replicates these characteristics. So, in principle, one can see the prospect of so-called designer babies in our future. This clearly will raise all kinds of ethical issues. To what extent should we allow such modifications? We need to start these discussions long before the technology for these modifications actually becomes feasible.

And bio-tech raises other worries. For example, in 2011, at the University of Wisconsin and at Erasmus University in Rotterdam, experiments showed a surprisingly easy method for making the influenza virus both more virulent and more transmissible. These so-called gain-of-function experiments did not get funded further by the US government after 2014, on the grounds that such dangerous knowledge could be misused, either by error or by design. Those conducting such experiments had argued that understanding the influenza virus well enough can help us to stay one step ahead of its natural modifications. But others advocated putting the brakes on this and other bio-technologies.

In my book I contrast that 2011 situation with an important meeting held in Asilomar, California in the mid-1970s, in the early days of recombinant DNA. At this meeting, experts discussed whether to impose a moratorium on certain kinds of potentially risky experiments. They took a rather cautious line, and the limited number of groups active in the field at the time obeyed this moratorium. But things look quite different now, with new technologies studied all over the world, with strong commercial pressures, and the relevant equipment widely available in laboratories. It will be near-impossible to enforce regulations globally (just think of our problems enforcing drug laws or tax laws). So I worry that anything that can be done will be done, somewhere by somebody, whatever the regulations say.

Still sticking to this book’s Prospects for Humanity subtitle: reading about genetic editing on the individual level, or gene drives on the species level, also pointed me to questions of how you might situate human prospects in a broader ecosystemic context. Do we have to assume that, given a basic interspecies competition for resources, most substantially strengthened human prospects cannot help but diminish prospects for almost all other species on the planet? Or what corresponding advances in humans’ ethical and self-reflective capacities will we need in order to balance some of the dramatic technological breakthroughs you foresee? I’ll want to ask specifically, a bit later, about prospects for more or less eclipsing flesh-and-blood biological life. But first, more generally, how and when should and could interspecies concerns factor into deliberations on appropriate pacings for innovative tech development?

We are clearly having a drastic effect on the entire global ecology. If we had views of the Earth from space, not just for the last 50 years, but throughout its history, we would see a massive acceleration in the rate of change for land use, due to urbanization and agriculture. Indeed, if humanity’s collective impact on land use and climate pushes too hard against what are sometimes called “planetary boundaries,” the resultant “ecological shock” could irreversibly impoverish our biosphere. Extinction rates are rising. We’re destroying the book of life before we’ve read it.

Biodiversity is crucial to human well-being. We will clearly be harmed if fish stocks dwindle to extinction, and if we eradicate plants in rain forests whose gene pool might be useful to us. But for many of us, biodiversity also has value in its own right, quite apart from its value to humans. Indeed, the Pope’s 2015 encyclical Laudato Si’ presents a firm papal endorsement of the Franciscan sentiment that we should cherish all of “God’s creation.”

Already more biomass exists in chickens and turkeys than in all of the world’s wild birds. And the biomass in humans, cows, and domestic animals is 20 times that in wild mammals. This trend may continue. But of course there also may be a reaction against the massive cruelty of factory farming. When I was asked recently to predict changes in social attitude in the coming decades, my most confident response was: “More vegetarianism, and more euthanasia.”

Returning then to fundamentally human concerns, your book takes as one of its basic premises that today’s border-crossing interconnectedness makes global disparities in income, health, and quality of life increasingly unsustainable — and with imminent advances in genetics and medicine threatening to escalate these disparities much further much faster. Here, as one particular point of focus, could we consider prospects over the next few decades for significantly extended human lifespans, and start to sketch the implications for domestic and international equality / justice / stability as these no-doubt beneficent health developments spread unevenly across the globe?

So first, to take one step backwards, I think we’d all agree that bio-medical advances over the past 50 or 100 years have benefitted the developing world even more than the developed world. Life expectancy in developing countries has risen very fast indeed. In terms of health and welfare and freedom from disease, global inequalities have diminished thanks to these innovations in medicine. But as you suggested, the reverse might happen in the future, if the main new advances remain extremely expensive and available only to a privileged few, while leaving the poorest behind. This could lead to a fundamental kind of inequality.

And as you also mentioned, we need to assess these outcomes against a backdrop in which inequalities between nations and regions of the world suddenly appear much more conspicuous, with poorer nations no longer as isolated from international developments. Travel and migration from these countries has become much easier. Even where societies don’t have toilets, they do have mobile phones and Internet connections. So they know what they’re missing, which provides the recipe for a greater discontent and embitterment, and a reduced acceptance of these basic inequalities. In my view, this should give us all a big incentive to help the disadvantaged parts of the world.

And we surely do need a focused research program to investigate what happens as we get older — what happens to our chromosomes, for example. I’m of course not an expert on this topic, but I doubt that we can see yet whether such progress will offer incremental improvements, or whether it will become a real game-changer, in the sense of reformulating ageing as something more like a disease which we can cure. But again, that seemingly positive development would lead to immense societal disruption, and would have serious implications for global population trends.

If such game-changing technologies did develop, it would be crucial to ensure that they were fairly available to all people, and also to ensure that societies could adjust to these changes gradually. And of course, it’s the years of healthy life that we’d like to extend. We don’t want simply to drag on in more years of senility.

In terms of tech-driven global disparities, your book also points to the imperative for post-industrial societies to help ensure that their emerging counterparts do not repeat the personally acquisitive, collectively destructive trajectory of preceding models for social “progress” — and instead leapfrog certain stages of industrial development through both unprecedented technical and cultural advances. You suggest, as one example, the need to envision and implement substantial reforms not just for the nation-state, but specifically with tech innovation helping to maximize stability and sustainability in the developing world’s megacities. So could you describe more broadly here some of the socio-environmental-ethical dynamics most in need of leapfrogging at various organizational levels: from international cooperation, to the nation, to the megacity, to the individual person?

Our global population has doubled in the past 50 years, to around 7.6 billion. It almost certainly will rise to about nine billion by mid-century, and might go up even more in this century’s second half. It seems clear that the world cannot support a population of nine billion people if they all live like present-day middle-class Americans, eating as much beef and using as much energy. But if we do want, by mid-century, to see a world where presently under-developed countries offer a good lifestyle, then they cannot just “track” the ways in which Europe and North America developed. To avoid dangerous climate change, to avoid encroaching too much on natural land and causing massive loss of biodiversity, these countries will need to leapfrog to a high-tech lifestyle much less wasteful of energy and resources.

I don’t consider it crazy to think that we can feed nine billion people and give them a good lifestyle. We will need high-tech agriculture, and perhaps artificial meat and other innovations. But to ensure that poorer countries can develop, we also will need a great deal of altruism on the part of wealthy nations. Countries in sub-Saharan Africa and the Middle East won’t be able to boost their economic development in the same way that South Korea and Vietnam did (by manufacturing with lower wage levels than Europe and North America), because robots will take over manufacturing. That ladder for hugely beneficial and effective economic development has now been kicked away. African countries cannot get out of the poverty trap that same way. We will need to develop some mechanism whereby wealthy countries subsidize and help to promote sustainable development in poorer countries. And of course this wouldn’t just be altruism on the part of rich countries. It’s in their self-interest — indeed, it’s the only way to avoid a dangerous and unstable world, with massive migration on a scale much larger than the boat people and Syrian refugees we see in Europe today.

Similarly, all countries should promote and implement best practices for efficient energy consumption. We all should want to see the cost of clean energy drop dramatically, so that India, for instance, can overcome its current dependence on smoky stoves burning wood and dung by leapfrogging directly to clean energy, rather than building coal-fired power stations. We should accelerate research and development into energy generation, energy storage, and smart grids. In fact, this should be prioritized as highly as medical and defense research. At the same time, we should facilitate Internet-based education and commerce to bring new skills and opportunities to indigenous peoples and to people in the developing world, so that they can earn money by remotely providing services for wealthy countries.

Still with such potential transformations of our global economy in mind, and even as I ask about what should be done in various contexts, I also keep returning to your incisive formulation of a growing gulf “between the way the world is and the way it could be.” On the Future sketches a recurrent scenario in which “scientific and technical breakthroughs…happen so fast and unpredictably that we may not properly cope with them,” in which we fail to meet the basic challenge of harnessing technological benefits while minimizing technological downsides. So here, from a more macro perspective, could you offer a concrete example or two of this “explosive disjunction between…ever-shortening timescales of social and technological change,” and the exponentially expanding stakes of decisions we make which “will resonate for thousands of years” — and which ultimately might impact “billion-year time-spans of biology, geology and cosmology”?

In medieval times, people didn’t expect the next generation to have lives very different from their own. By contrast, we now can’t predict what our children and grandchildren will experience. Smartphones and many everyday appurtenances would have seemed magic just 25 years ago. So we can’t really predict lifestyles in 2050. Again this makes people more reluctant to sacrifice for the long term, because we can’t comprehend what that long term might look like. It might seem topsy-turvy that our planning horizon has shrunk even as our cosmic horizons have hugely enlarged. But these cosmic horizons now stretch for billions of years, whereas cathedral builders in the Middle Ages believed that the world could end in apocalypse after one thousand years.

Again, technological development is empowering, and, if appropriately directed, is really our only way to provide a decent life for all 7.6 billion people. But the fact that more than one billion people still live in destitution and poverty, on less than $2.00 a day, indicates, in my view, a severe moral failing — particularly when the income of this “bottom billion” could be almost doubled by transferring the income yielded by the accumulated wealth of the richest thousand people in the world. That huge inequality illustrates, in a very stark way, the gap between how our world could be and how it actually is. And these huge inequalities prevent us from using present technologies to maximize global well-being.

We also should keep in mind what makes this present century unique. My book takes as one of its major themes that although the Earth has existed for 45 million centuries, this is the first when one species (namely the human species) can shape the planet’s fate for billions of years to come. We have become numerous enough as a species and empowered enough by technology to risk causing irreversible changes to Earth’s habitat. So what we do (in this generation and the next) might resonate across many future centuries, though we are not adequately thinking about this. We think very short-term. Or on a topic like climate change, we certainly do not fail to discuss it, but we have failed to act sufficiently in response to it.

Most politicians, quite naturally, focus on what might happen between now and the next election. Businesses likewise discount the future quite heavily in their decision-making, and often worry much more about quarterly results. And this all gets even more complex when the focus needs to be global rather than parochial. Climate change will have a more serious impact in equatorial regions than in countries like yours and mine. So persuading politicians to address what may affect people in remote parts of the world 50 years from now is a big ask. I don’t see it happening.

One context in which we do maintain a near-zero discount-rate on the long-term future concerns the disposing of radioactive waste. We develop underground depositories planned to stay safe and secure for at least ten thousand years, or in the case of the now-abandoned Yucca Mountain depository, for a million years. Ironically, we plan that far ahead on waste disposal, but we can’t plan energy policy itself even 20 or 30 years ahead.

Here could you outline your book’s “pessimistic guess” on how we will in fact respond to climate change over the next 20 years, and how extended inaction might force more drastic interventions a few decades from now? And more generally, even as On the Future poses the rhetorical question “Why do governments respond with torpor to the climate threat,” and even with all the lamentable short-sighted or self-interested indifference to climate realities, when I return to your formulation of “ever-shortening timescales” impacting much longer “spans of biology,” I recall that the human species hasn’t had much time (evolutionarily, at least) to develop self-reflective ecological awareness, and probably will get much less time to adjust to escalating existential risks posed by further technological advances. So how could we not only overcome our present torpor on climate change, but treat this as a case study for how to leapfrog personal / societal / economic inclinations to torpor for those even more acute crises still to come?

If we could indeed deal with climate change, that would give a huge boost to the global psyche, as it were, and would allow us to feel that we could tackle other big problems. And we do need to recognize that, for the first time in history, enough of us have been empowered enough by technology to truly make a difference for the entire planet. You couldn’t say that 100 years ago. Of course humans then might have messed up their local environment or region, whereas now global interconnection means that if things go bad, if we alter the whole biosphere, then no continent will escape.

I am pessimistic about sufficient action being taken via the pledges made at the 2015 Paris conference and its follow-ups. I don’t see these initiatives cutting global CO2 emissions enough to avoid encroaching into what the experts consider dangerous territory — with a global temperature rise of two degrees or more, and the possibility of encountering drastic tipping points.

My book argues that the only win-win situation for dealing with climate change would be an accelerated program to improve all forms of carbon-free energy, with more solar and wind on efficient global-scale electric grids. And though this might be controversial, I think improved nuclear designs should also be part of the mix. Right now, our nuclear plants operate on designs 40 years out of date. We can do much better today. We’ll also need to develop stronger batteries and new forms of energy storage, with improved ways to distribute energy during periods of dark, or clouds, or when the wind doesn’t blow. We’ll need smart grids that can transfer energy from the sunny South to the cold dark North, and from east to west across the United States as peak demand shifts from one time zone to the next — and even more ambitiously, running all the way from Europe to China, as part of China’s Belt and Road Initiative. We need these big-scale enterprises, equivalent to building the world’s railways in the 19th century. Without that type of thinking, we can’t expect to prevent irreversible climate change.

My book also discusses a “Plan B.” If, 20 years from now, we have still failed to reduce carbon emissions, then we’ll face increasing pressure for panic measures, such as so-called geo-engineering initiatives. For example, it’s feasible to inject enough tiny particles (an “artificial volcano”) into the upper atmosphere to shield the Earth from a certain percentage of sunlight, thereby cooling it down. But we should consider these second-best approaches: feasible, but with their own unpredictable consequences, and still failing to address a variety of problems. Most geo-engineering proposals don’t deal with ocean acidification. They don’t reduce the increasing CO2 concentration in the atmosphere. So geo-engineering might well create more problems than it solves. The only beneficiaries might be international lawyers, who would have a bonanza if nations could litigate over bad weather.

Still with catastrophic-risk scenarios in mind, I appreciate your focus on human-derived threats, rather than potential cosmic calamities — given that these human threats are the ones growing fast. Here, for example, could we return to possibilities for “bio error or terror,” again with broader global trends towards interconnectedness leaving human networks increasingly vulnerable to shock, with even small-scale bio-tech attacks or mistakes carrying the potential to negate years of more beneficent advances, with even a relatively minor bio-terror scenario potentially provoking maximum social panic, and thus with “The rising empowerment of tech-savvy groups (or even individuals)” posing “an intractable challenge to governments,” and aggravating “the tension among freedom, privacy, and security”? How could we most proactively constrain the anticipated consequences of such scenarios, and how might such prophylactic efforts by themselves already reshape everyday life in ways many people might regret or resist?

We’ve already seen small-scale examples where a group of people can cause a bio-tech or cyber-tech catastrophe that could cascade globally. We obviously should do all we can to minimize such risks, but we never can eliminate them when so many people have the capacities and the expertise. And this technology doesn’t require the types of specialized, conspicuous facilities required for building an atomic bomb. Even with intrusive surveillance, we can’t keep track of all the threats. So I anticipate a greater tension among the three goals of privacy, security, and freedom. I think we’ll have to accept more intrusive surveillance, in order to reduce the chance of some lone wolf causing catastrophe. And we’ll have to prepare for our interconnected societies growing increasingly fragile and brittle. For instance, if the electric grid went down and stayed down on the East Coast of the United States, that would lead to anarchy within a few days. Indeed, a 2012 report from the US Defense Department said that such an event could “merit a nuclear response” — if, of course, the perpetrator could be identified.

And a pandemic (whether natural or artificially induced) could lead to social breakdown quite quickly. There’s a contrast with the 14th-century bubonic plague, the Black Death. In many towns, half the population died from this plague, but the rest of the population fatalistically went on as before. Today, with a much more minor pandemic, with just one percent of the population affected, I sense we’d experience a true social breakdown, overwhelming the capacity of hospitals, with everybody clamoring for a kind of treatment that wouldn’t be available for all. All transport and supply chains would break down. And any such catastrophe would be likely to cascade globally — in contrast to the earlier episodes discussed, for instance, in Jared Diamond’s book Collapse.

Well when you bring in efficiency-maximizing hospital systems, or the increased centrality / vulnerability of incessant everyday commercial exchange (even as we discuss humanity’s dire need to enhance its cognitive, strategic, ethical, cooperative capacities for addressing challenges posed by technological advance still to come), I also wonder to what extent we need to speak of this driving force not just as abstracted “technological advance,” but more concretely as profit-seeking capitalism. So could you outline On the Future’s somewhat ambivalent account of capitalist incentives shaping futurist prospects (pointing warily, for instance, to data acquisition “already shifting the balance of power from governments to the commercial world,” but also appreciating how tech corporations’ incursions “into a domain long dominated by NASA and a few aerospace conglomerates” will more efficiently promote space exploration and travel — presumably still with some public regulation, but with “the impetus…private or corporate”)? What new norms of dynamic social responsibility do we need to coax forth from those capitalist-tech enterprises increasingly directing societal development, and what governmental, regulatory, public pressures can help push them to such self-reflective depths?

We definitely should build on international cooperation. We have the World Health Organization. We have the International Atomic Energy Agency. We may need additional global organizations like this to deal with the risks from bio-tech, from AI, etcetera. But also, as you say, we already see some of the biggest companies expanding their global reach. And on the other hand, bio-tech industries also can (and will have to) be a force for good. We’ll need new medicines from major pharmaceutical companies, and new means of production and distribution. And some corporations do take a much longer-term approach with their technological investments and research — which we certainly should welcome. At present, for instance, I would note the especially urgent need to develop new antibiotics. Over-use of existing antibiotics has built up microbial resistance that renders them ineffectual. To avoid a global emergency, we must incentivize Big Pharma to develop these new antibiotics.

But here again, the understandably shorter-term and more localized focus of democratically elected politicians also means that we need an energized and engaged public. Public campaigning on a big enough scale can push our politicians to address these much longer-term questions regarding the plight of the developing world, and climate change, and minimizing technological risks. Politicians do care about what shows up in their inboxes.

I also have known quite a few scientific advisors in government, who tend to get frustrated because their masters get consumed by urgent (and less urgent) short-term issues. But scientists can have an important effect when they engage directly with the public. I think of Carl Sagan as a prime example. We need more people like him who can “electrify” the public through their passion and eloquence. When the public is energized, its collective voice will make politicians respond. The anthropologist Margaret Mead famously averred that: “It takes only a few determined people to change the world. Indeed, nothing else ever has.” And if you think about the anti-slavery campaign, Black Power campaigns, gay-rights and environmental campaigns — they all found a way to get through to a wider public.

And so, perhaps in Carl Sagan fashion, I wonder if we could close on a few topics more closely related to your own work in astronomy. Returning, for example, to the topic of how capitalism shapes technological outcomes, I also think of your persistent formulation of “science” as a realm not just of purist intellectual conjecture, but of constant corollary engineering innovation. Could you offer a couple instances where some of the most exciting advances in current scientific thought stem primarily from mechanical / engineering breakthroughs?

I’m very mindful of that sort of symbiosis. Of course we’ve all learned in school about how most tech advances depend on science, and how Thomas Edison’s inventions depended on the earlier “pure” research of Michael Faraday and James Clerk Maxwell. But this interdependence of intellectual discovery and more practical engineering also can go in the opposite direction. Indeed, in my own field of astronomy, advances have stemmed almost entirely from better instruments and better technology: from bigger telescopes, from better ways of detecting faint light (through adaptations of the technology used in smartphone cameras), and of course from the ability to send things up into space. My field has benefitted hugely from advances made by the consumer-electronics industry, and also from space technologies initially developed for defense purposes, and then for commercial purposes. Often the actual scientific discovery is the cheap bit, and developing the technological capacities is harder and more demanding. Armchair theorists can’t achieve much on their own.

Moreover, any discovery has both benign and damaging potential implications. Consider, for example, the laser. Its invention depended crucially on an idea Einstein had 40 years earlier. And even the people who invented the laser, around 1960, couldn’t have guessed its future uses: as a weapon, for eye surgery, or in DVD players. Neither the theorist nor the inventor can fully predict how a discovery will be applied. In pure science, the scientists themselves can best judge which questions are most crucial and timely to pursue, and how to tackle them. But choices on how these discoveries should be utilized involve commercial, ethical, and prudential considerations, and a wider public must be involved in those decisions. Scientists should take part, alongside many other types of citizens. At the same time, we can’t just put the brakes on science. We may however try to relinquish or slow down certain innovations, and strategically speed up others.

Here again, I definitely think of the potential “game-changer” of increased human malleability brought about through genetic and cyborgian enhancement: perhaps dissolving certain basic species continuities that have persisted across millennia, perhaps with the human species itself branching off as pragmatic adjustments to various interplanetary conditions get made, perhaps with Darwinian evolution as a whole eclipsed, and likely (you tell us) to produce “‘inorganics’ — intelligent electronic robots” eventually gaining dominance. I’d love to unpack that whole trajectory, but in terms of a specific question: your alternating references to “electronic” and “robotic” life made me sometimes picture these future beings as shiny C3PO-like humanoids, and sometimes conceive of these “electronic” entities more like present-day computer programs or networks. So would electronic life desire or need physical embodiment (at least on the scale we typically conceive of it) at all? And as some possible extensions: would such relatively disembodied electronic life require exponentially fewer material resources to power itself? And / or might it need inconceivably expanded infrastructural development in order for its electronic beings to circulate? Is it ever in fact easier to send an intelligent biological body far into unexplored space than a computerized entity, which might need a corresponding terminal or transmitting capacity on the other side?

Even that question takes us decades, or maybe even centuries, ahead. But I’d stress that the timescale on which such developments will occur is still exceedingly rapid compared to Darwinian evolution. It’s taken about four billion years for our biosphere to evolve from the primeval soup. And Darwinian selection requires a million years or more for a new species to emerge and evolve and maybe become extinct.

Future evolution, however, will happen not by Darwinian selection, but by what you might call secular intelligent design. Even by the end of this century, there may be substantial possibilities for human enhancement — and possibly for links between human brains and something electronic. Alternately, such developments might take centuries. But that would still be one part in 10 million of what the Earth has ahead of it, on a geological timescale.

We have no clear idea on whether electronic entities will take over completely. But we should recognize clear limits to what we can do with the wet hardware in our skulls. We still don’t really understand the extremely intricate networks interlinking neurons in our brain. But we know they operate about a million times slower than the electronic signals that propagate in a computer. A computer’s advantage of speed explains why an AI just given the rules to play chess can, within three hours, defeat the world champion. Added to this speed advantage, electronic intelligences have the potential to be immortal. And such intelligences may prefer not to live on a planet at all. They may not want an atmosphere. They might prefer zero gravity, so they might push further out into space.

But returning specifically to human enhancement, I do think we should regulate these technologies here on Earth, so that they don’t change our species too fast. Given, however, that scientific space exploration by humans seems less necessary and less useful now that robots can do most of what humans can do, my book also suggests that we need to reconceive human space travel as a cut-price, high-risk enterprise. We should cheer along the thrill-seeking adventurers who will want to get to Mars by the end of this century. And these people will be cosmically important, because they’re the ones most likely to spearhead a post-human era (since if they want to live on Mars, they’ll probably need to redesign themselves).

Humans are very well adapted to the Earth — which means not at all suited to conditions on Mars. So these settlers might want to change their progeny drastically if genetic technology allows. They may even want to download their intelligence into something electronic, if possible. This transition to some different species we would no longer call human seems most likely to happen away from the Earth, because those people on Mars will have the incentive, and will be further away from the regulators.

The question astronomers are perhaps most often asked is whether there are intelligent aliens already out there. Of course we don’t know. But my guess is that if we ever were to detect something artificial in space, we would probably detect something electronic. This argument comes out of considering the timeline for Earth’s own history. It first took about four billion years for technological intelligence to emerge, and since then it has taken only a few millennia for us to develop the capability to travel beyond the Earth, with possibly just a few centuries left before electronic intelligence usurps human life. And there are literally billions of years to follow. So unless some other Earth-like planet’s development has been completely synchronized with ours, the emergence of intelligent life elsewhere would have to be drastically behind, or ahead of, what has happened here. So if we detect some bizarre artificial transmission, or some artifact indicating some sort of extraterrestrial intelligence, it most likely won’t come from a civilization of organic creatures, but from some electronic entity perhaps remotely descended from some biological species like us.

Yeah I promise not to ask that ET question, but here as we continue progressing into speculative realms far beyond my capacity for comprehension, could we pick up your observation that research topics related to the origins of life (and thus to prospects for extraterrestrial life) no longer look too challenging for normative science to pursue? Could you describe where such research finds itself right now, and could you outline some of the most pressing questions it does pose?

Yes, the origin of life offers one good example, because although we’ve learned a huge amount about how life evolved from the simplest organisms to the intricate ecosystems of our biosphere (of which we are a part), the actual origins of life, the transition from complex molecules to something replicating and metabolizing, which we call “alive” — that is not yet understood. And I’d say that until 10 years ago, most scientists, quite rightly, put such questions in the “too difficult” box, and didn’t pursue them seriously. Scientists, like anybody else, don’t want to bang their heads against the wall for their entire working lifetime. But today you do see serious people working on biochemistry research that offers routes to theorizing the emergence of the first life.

Of course another crucial motivation comes from the astronomical discovery that most of the billions of stars in our own galaxy are orbited by planets, with many millions of planets (again just within this quite small cosmic sample) seeming rather like the Earth: approximating the size of the Earth, the temperature of the Earth, and probably having water on them, etcetera. And within the next 10 or 20 years, astronomers should be able to observe the nearest of these planets (not in great detail at first, but well enough to get some idea of whether they might have some sort of biosphere, some vegetation or some life on them). So again, the origin of life presents a clear example of a scientific topic that has become mainstream — because people now think they actually can make progress and can test their ideas by, perhaps, finding some hospitable biosphere other than the only one we know.

And then more personally, in relation to scientific contributions made across your own career, could you make as clear as possible present-day prospects for the “fourth and grandest Copernican revolution,” the recognition of a multiverse within which “The entire panorama that astronomers can observe could be a tiny part of the aftermath of ‘our’ big bang, which is itself just one bang among the perhaps infinite ensemble”? Answering which questions would best help us to ascertain “whether or not we live in a multiverse,” and “how much variety its constituent ‘universes’ display”? And where does our most resonant allegorical language still need to cultivate a more communicable vision of the multiverse?

Well first I should just point to how amazingly gratifying it is that during the roughly 40 years that I’ve been active in astronomy, we’ve gone from not knowing whether a Big Bang had ever happened, to our current stage of being able to trace with confidence the outlines of cosmic history back 13.8 billion years, to a moment only a billionth of a second after the Big Bang. When the history of our last half-century of science gets written, that amazing progress will be understood as one of the high points. But if we now want to answer some more fundamental questions (such as why the universe keeps expanding in the way it does, or why it contains the particular mixture of atoms and radiation that we measure or infer), those answers still lie buried deep in this first billionth of a second — when everything would have been squeezed so much denser, and particles would have moved around with higher thermal energy than any human experiment can produce even in the biggest particle accelerator. We still have no experimental foothold to give us guidance on the physics of those very early times. That’s the stumbling block for now.

Similarly, in order to make progress on understanding how big our universe is, or whether our Big Bang is the only one, we’ll need a major breakthrough in physics — of the type we had in the 20th century through gaining an understanding of gravity, relativity, and quantum theory. And we’ll also need to address how quantum effects and gravity interact in the hyper-dense early stage of the universe. Those who work on string theory (the most studied such “unified” theory) hope eventually to validate their theory by explaining why protons and electrons exist, and what their masses are, and so forth. If such a theory could successfully predict fundamental features of our everyday observable world, then we could take seriously its predictions even in domains we can’t observe. In particular, we could predict whether or not a Big Bang may replicate into many equivalent phenomena. And so we don’t yet know whether this theory would allow for the formation of a multiverse.

There’s a very definite and explicit model of the multiverse, called the model of eternal inflation, developed by Andrei Linde — a Russian cosmologist at Stanford. In this model, Big Bangs sprout all the time from some substrate. It’s a very powerful model, which works if the physics at this very early time have particular properties. Linde makes various assumptions about the physics for that early moment, and with some of those assumptions you’d get this eternal inflation with replicating Big Bangs, and with other assumptions you wouldn’t. If (50 years from now) we have a unified theory, corroborated by tests made in our low-energy world, we will know whether this data fulfills the requirements needed for Linde’s multiverse. If it does, then we would have reasons to take the multiverse seriously.

Finally then, returning to the confluence of abstract speculation and practical engineering, how might you most concretely bring in here the apparently more mechanical (though for me still imaginatively inconceivable) engineering challenge of designing a quantum computer which can “transcend the limits of even the fastest digital processor by, in effect, sharing the computational burden among a near infinity of parallel universes”?

Okay, but we’re now talking about another distinct conception of a multiverse, which comes from the so-called “many-worlds” interpretation of quantum mechanics. In quantum mechanics, as you know, certain events remain unpredictable, and things can happen in different ways. According to Hugh Everett’s interpretation from the 1950s (which many now take seriously), when any quantum-level event happens the universe bifurcates, with both options taken up, as it were. So if in one universe the electron goes to the left, in the other it goes to the right. And so there’s an exfoliation of universes. That’s, in fact, one of the most attractive ways to interpret and confirm quantum theory. That’s different from the Linde multiverse. But it does indeed allow the quantum computer to transcend the limitations of a normal computer by doing many things at the same time — as it were, in a universe which keeps bifurcating with every quantum event.